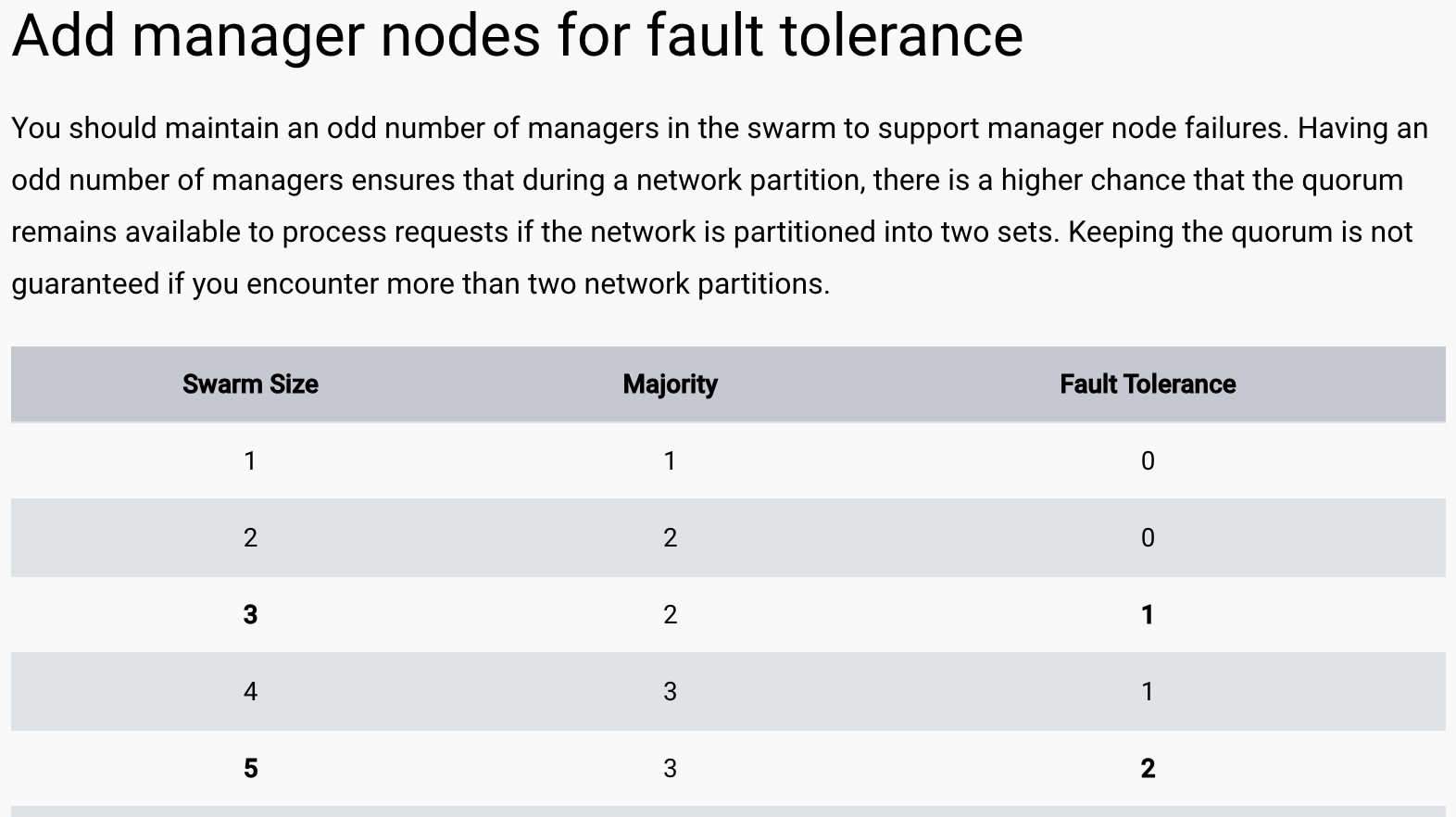

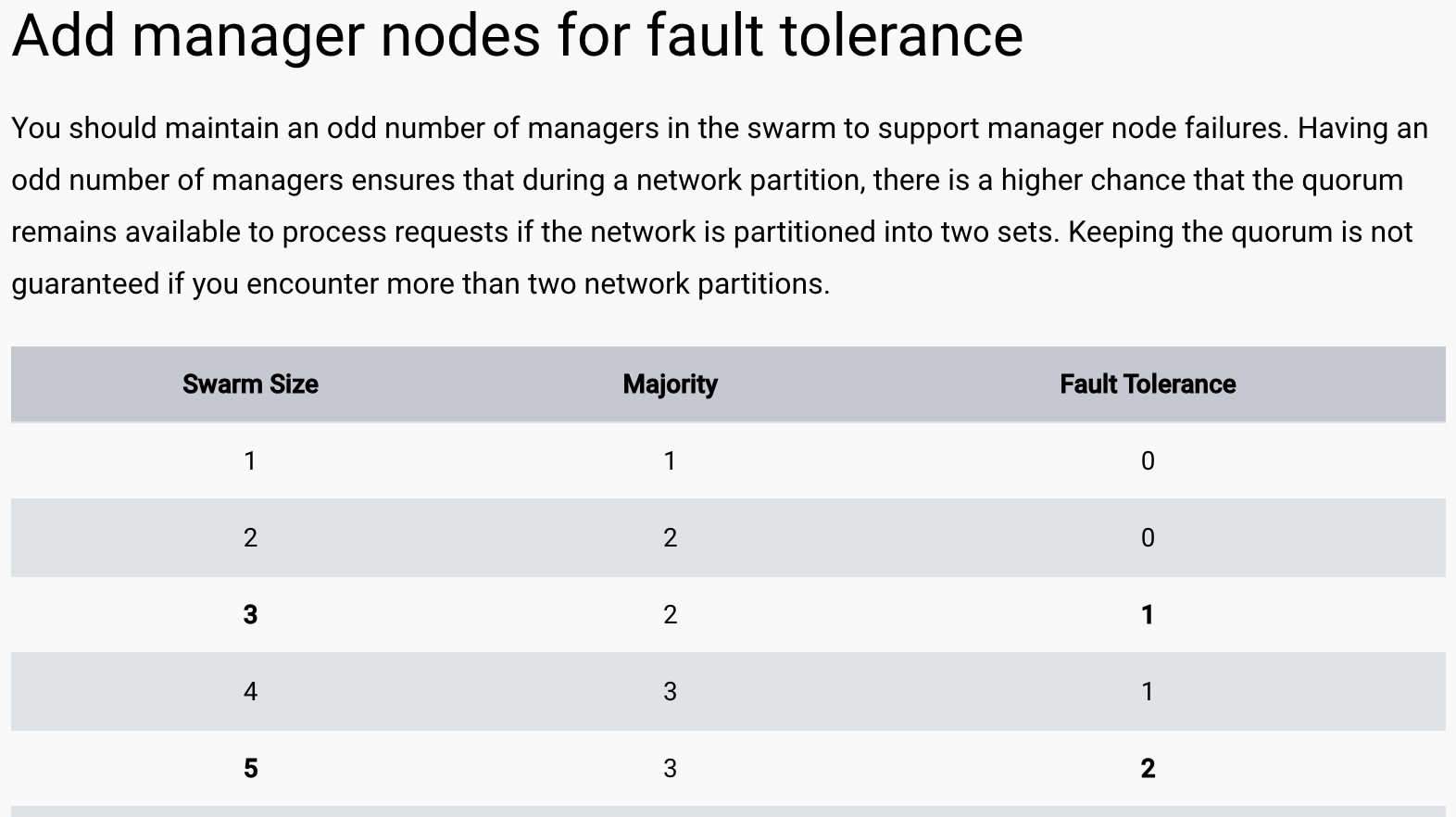

Docker swarm - fault tolerance

https://docs.docker.com/engine/swarm/admin_guide/#add-manager-nodes-for-fault-tolerance
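The fault-tolerance rule behind that guide can be sketched in a few lines. Swarm managers use Raft, so a swarm with N managers needs a majority (quorum) of floor(N/2) + 1 and can lose at most floor((N-1)/2) managers. The function names below are hypothetical and only for illustration; they are not part of any Docker API.

```python
def quorum(managers: int) -> int:
    """Smallest number of managers that forms a majority (illustrative helper)."""
    if managers < 1:
        raise ValueError("a swarm needs at least one manager")
    return managers // 2 + 1


def tolerated_failures(managers: int) -> int:
    """How many managers can be lost while a majority still remains."""
    if managers < 1:
        raise ValueError("a swarm needs at least one manager")
    return (managers - 1) // 2


if __name__ == "__main__":
    # Print a small table for common manager counts.
    for n in range(1, 8):
        print(f"managers={n} quorum={quorum(n)} tolerated_failures={tolerated_failures(n)}")
```

Note that an even manager count adds no tolerance over the odd count below it (4 managers tolerate 1 failure, the same as 3), which is why odd manager counts are usually recommended.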

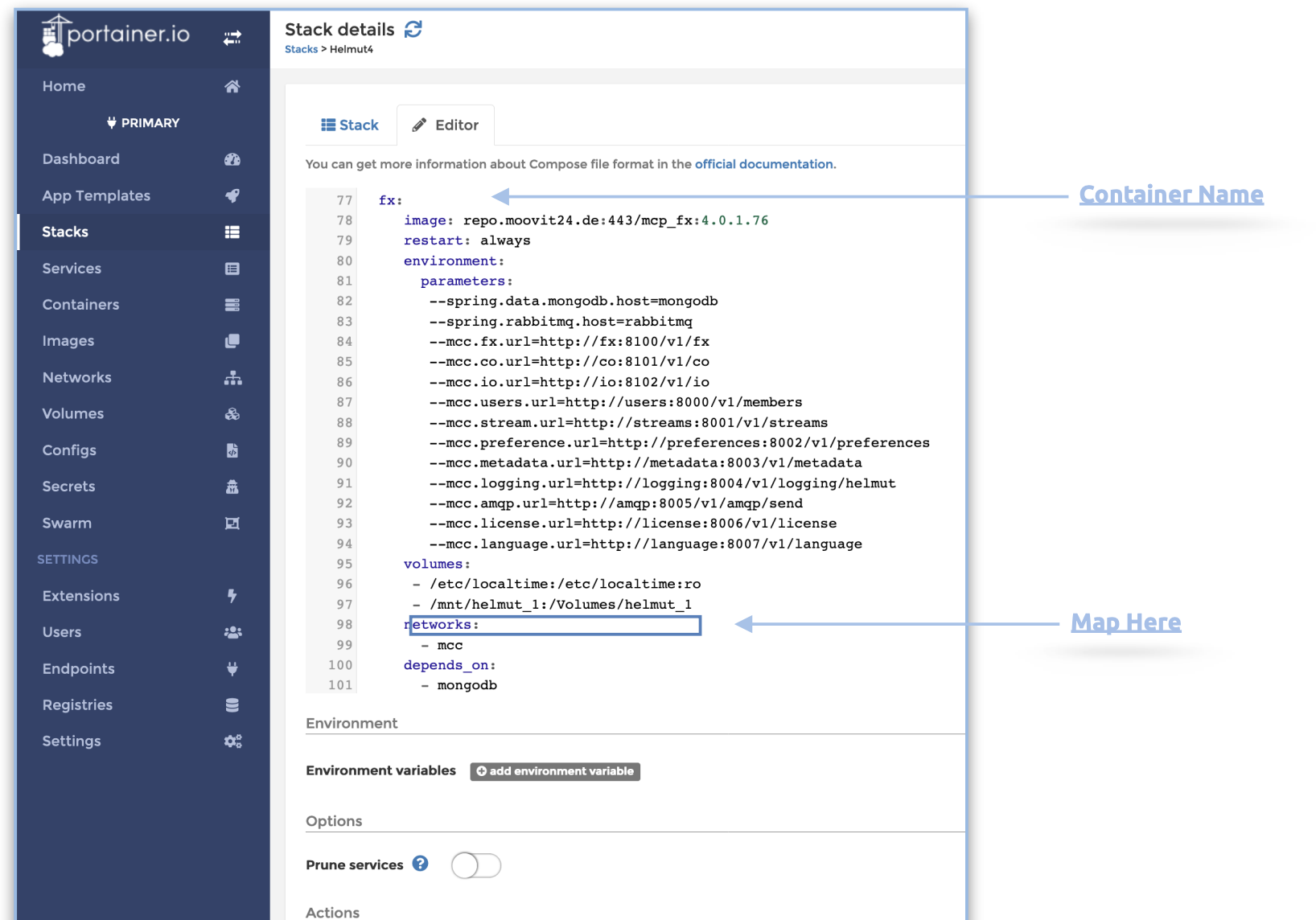

Configure images within stack